Technical Content Writer Agentic Platform

Agentic AI system for automated technical blog generation using LangGraph, Streamlit, and LLMs.

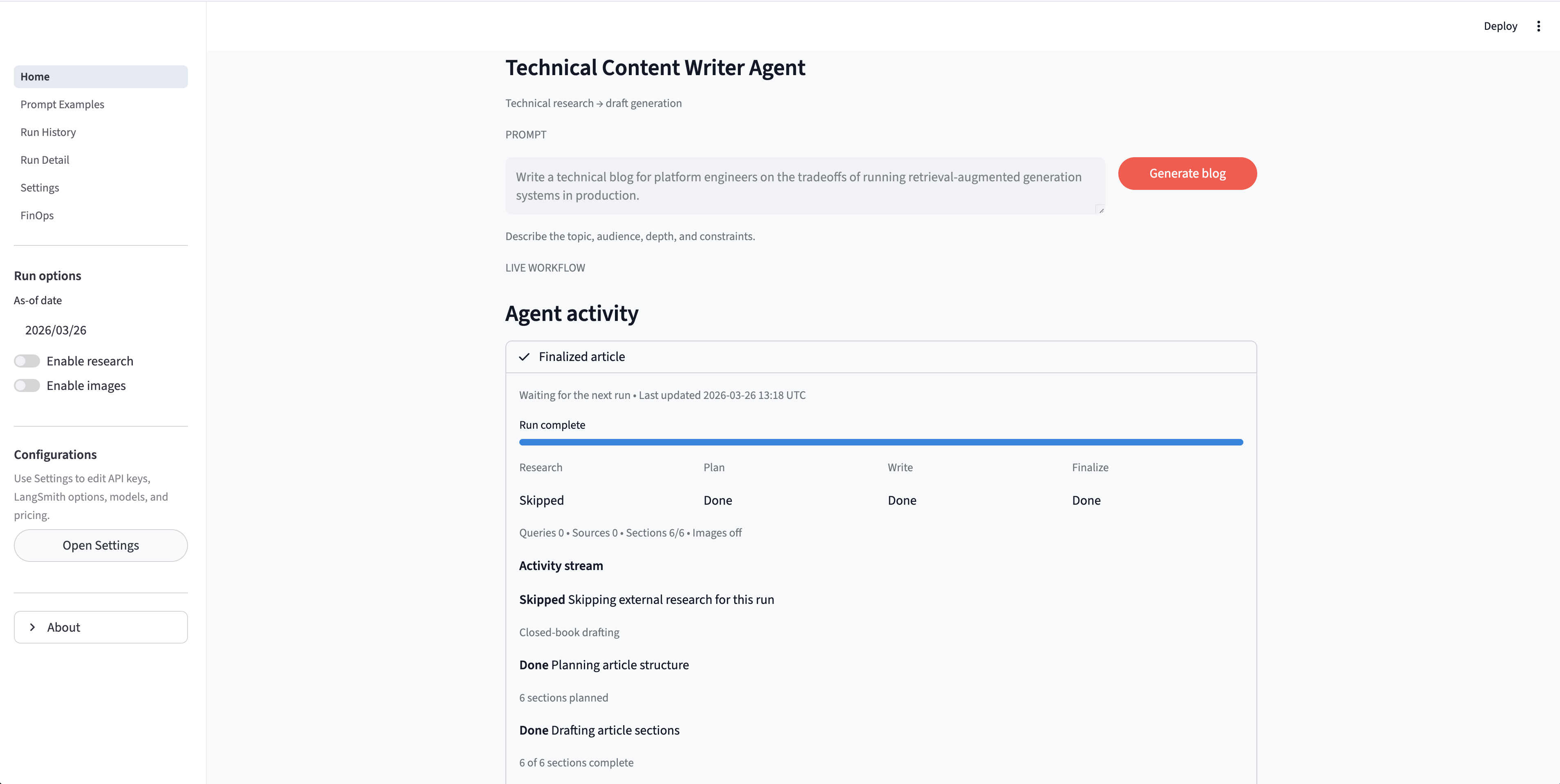

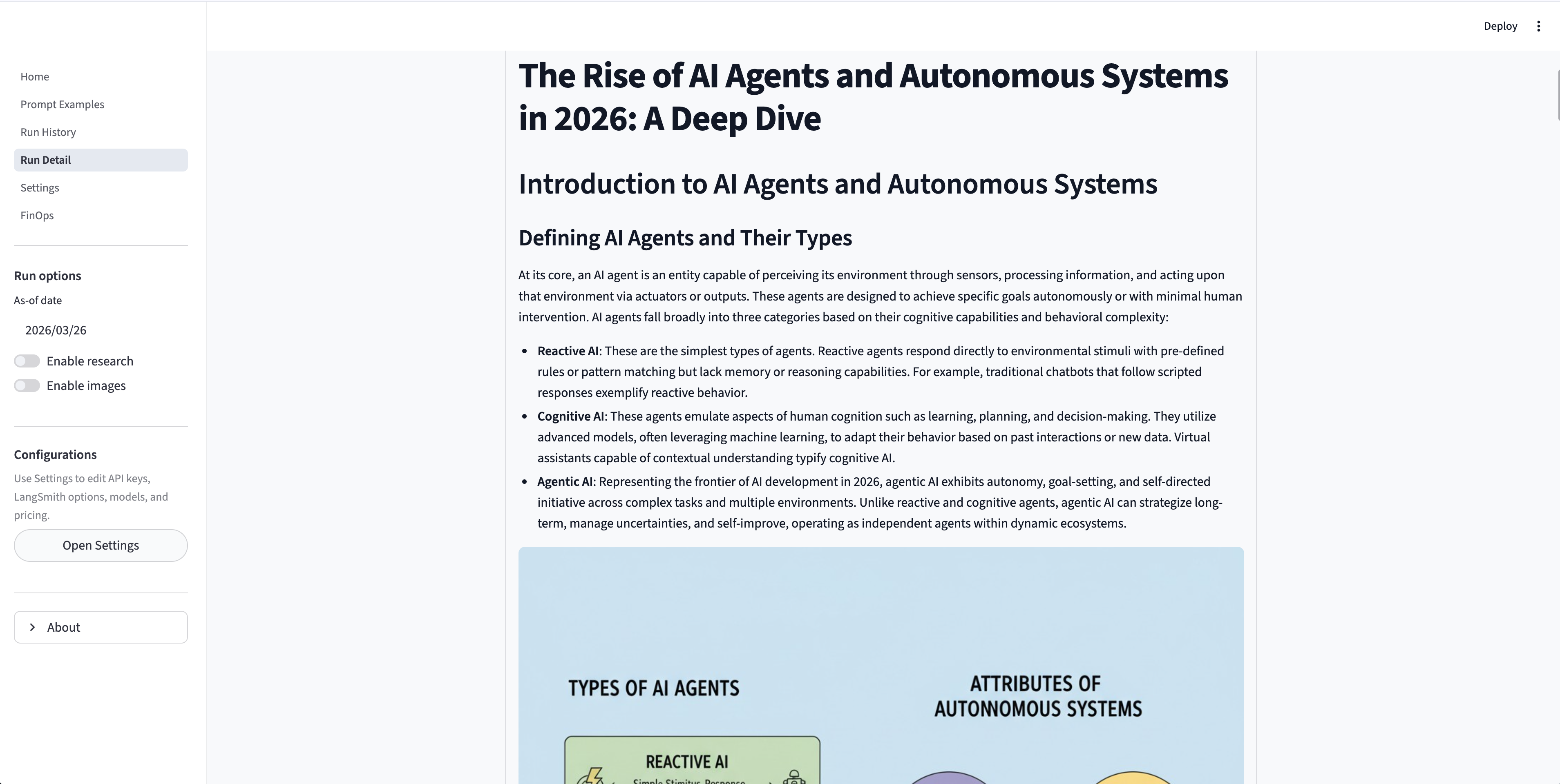

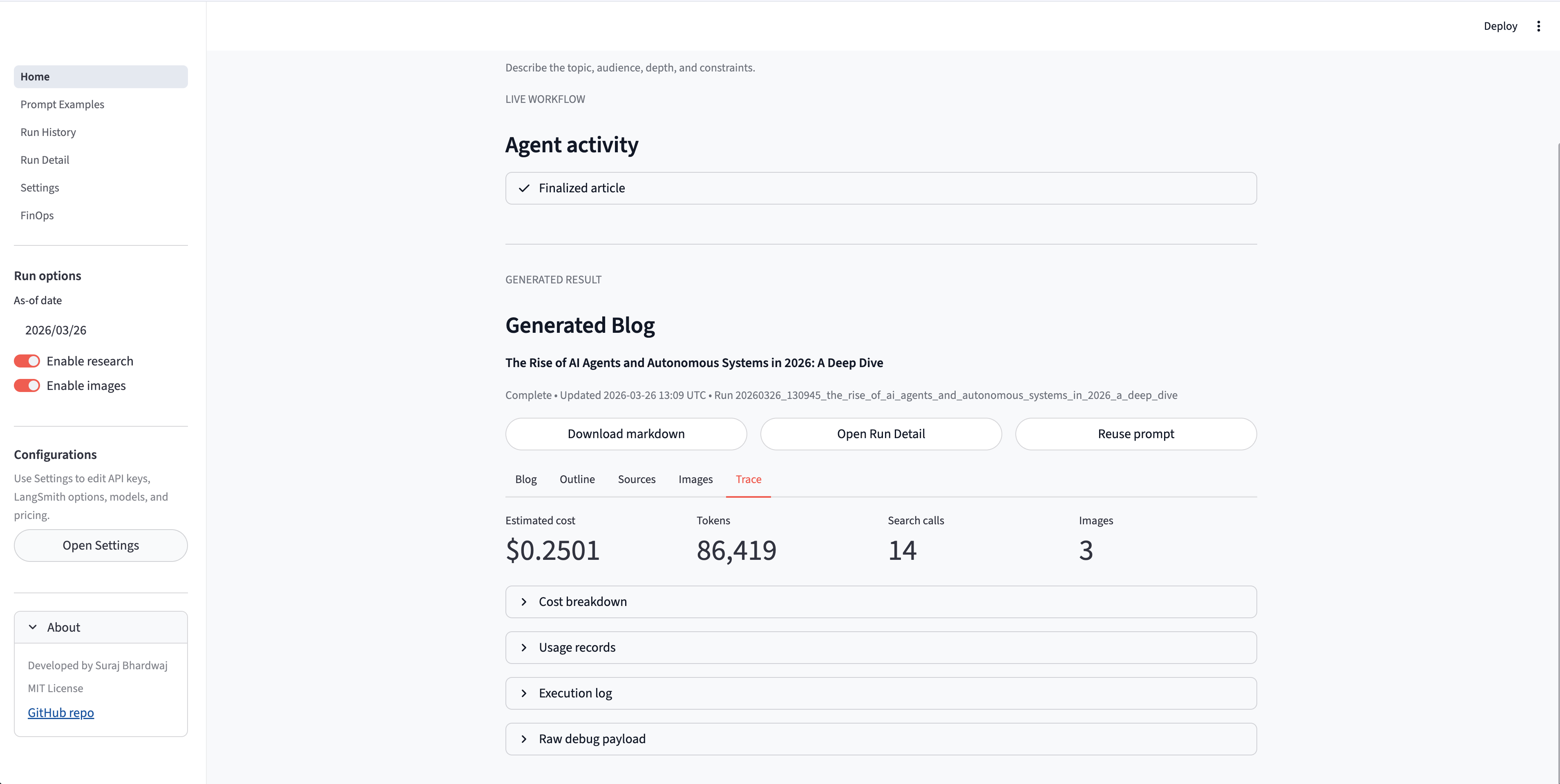

Deep Blog Agent (Technical Content Writer Agent) is an agentic AI system built on Streamlit and LangGraph that generates technical blog posts from a short prompt or topic. It combines structured planning, web research, long-form content synthesis, and optional image generation into a single reproducible workflow.

| 👉 Live Demo (Streamlit) | 👉 GitHub Repository | 👉 YouTube Demo |

Overview

This project addresses a common problem in technical writing: how to quickly generate a structured, research-backed draft while keeping the process transparent and reproducible.

The system provides:

- A Streamlit workspace with live workflow visibility

- A LangGraph-based multi-stage pipeline

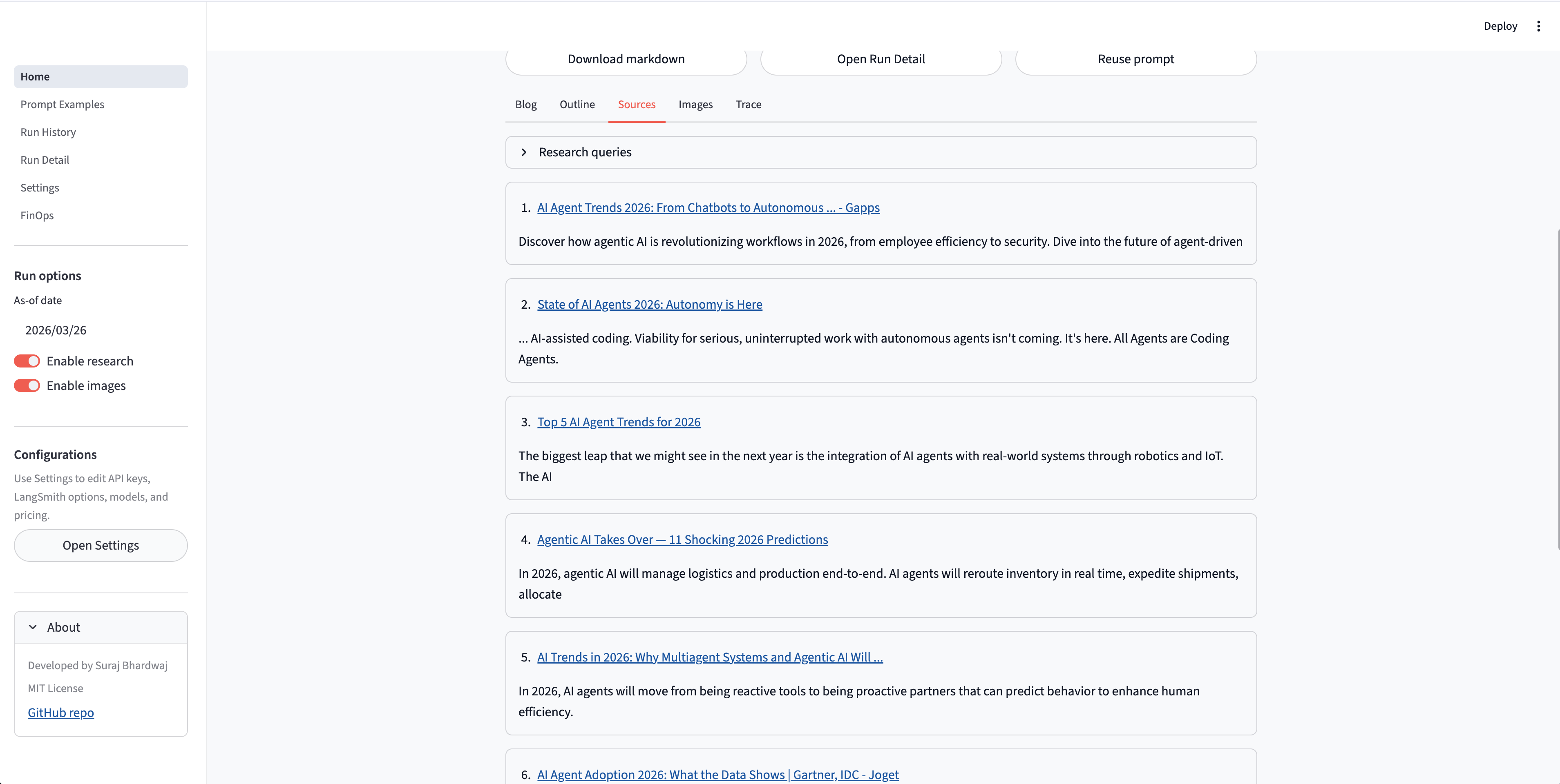

- Optional web research using Tavily

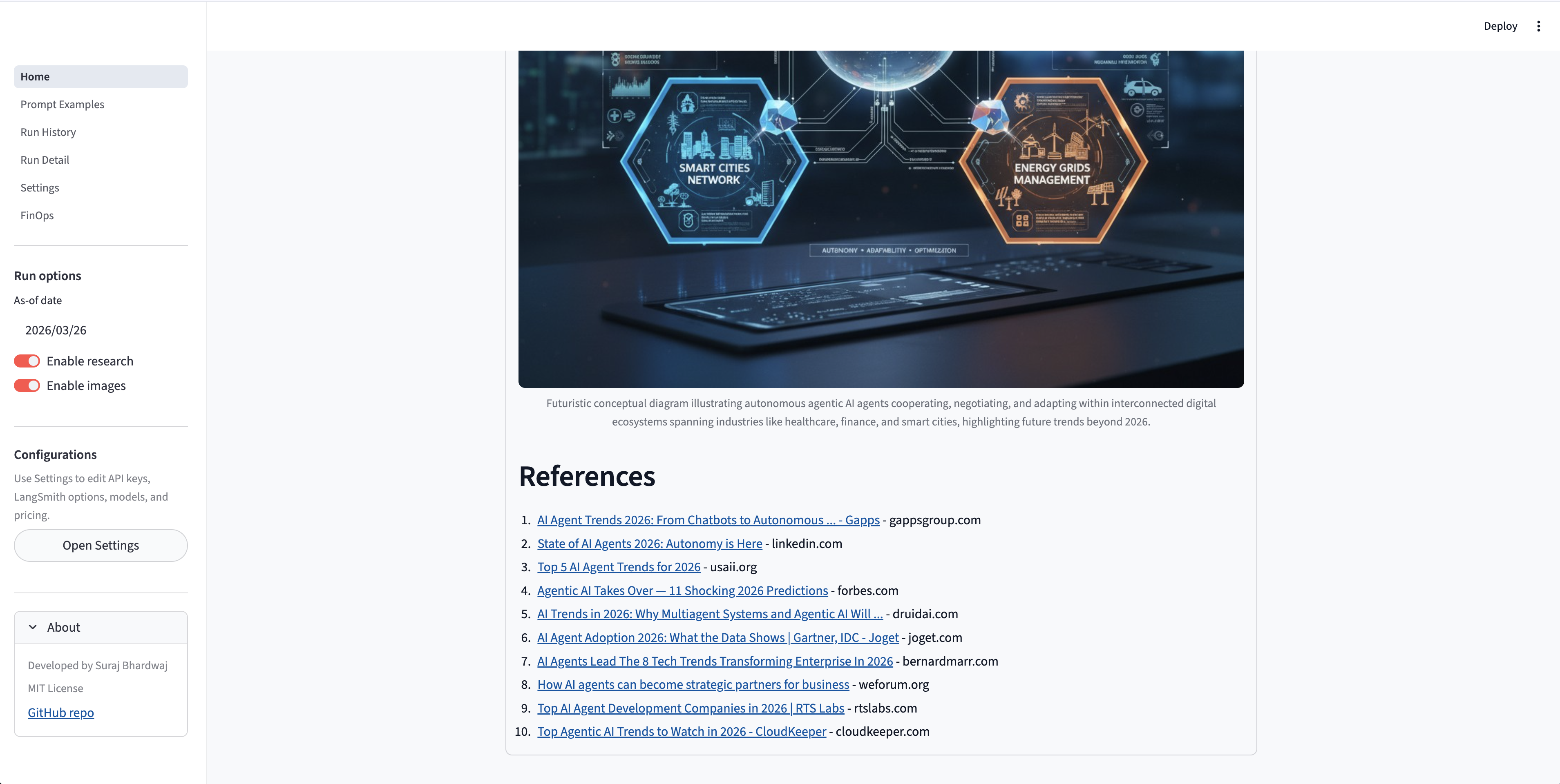

- Optional image generation for diagrams

- Persistent artifact storage for reproducibility

System Workflow

Each run follows a structured pipeline:

- Accept a topic or prompt

- Decide whether research is needed

- Retrieve and process evidence

- Generate a structured outline

- Draft sections in parallel

- Assemble the final Markdown

- Optionally generate and embed images

- Save outputs as reusable artifacts

Architecture (Agentic Pipeline)

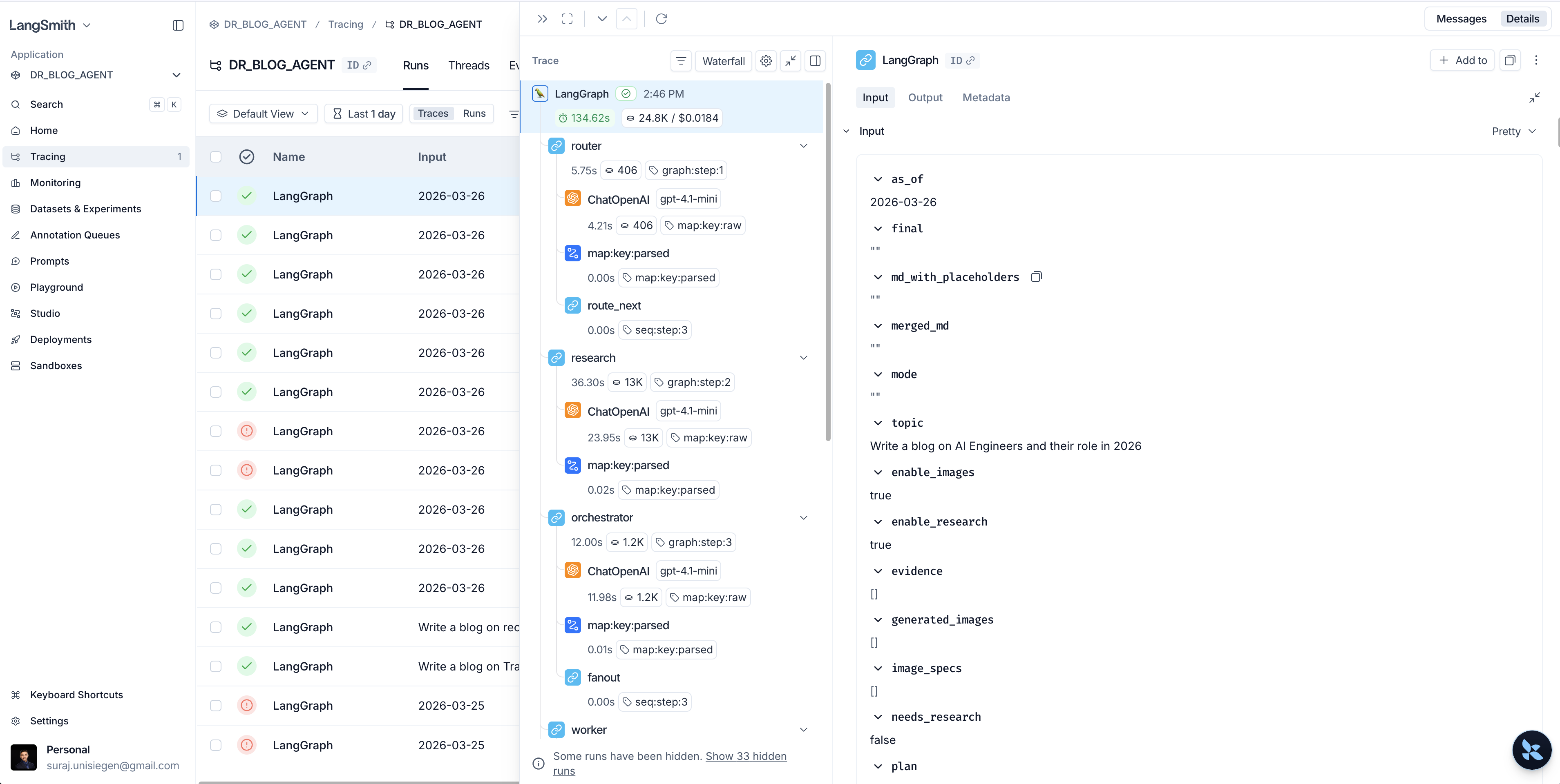

The system is implemented using LangGraph, enabling modular and observable workflows.

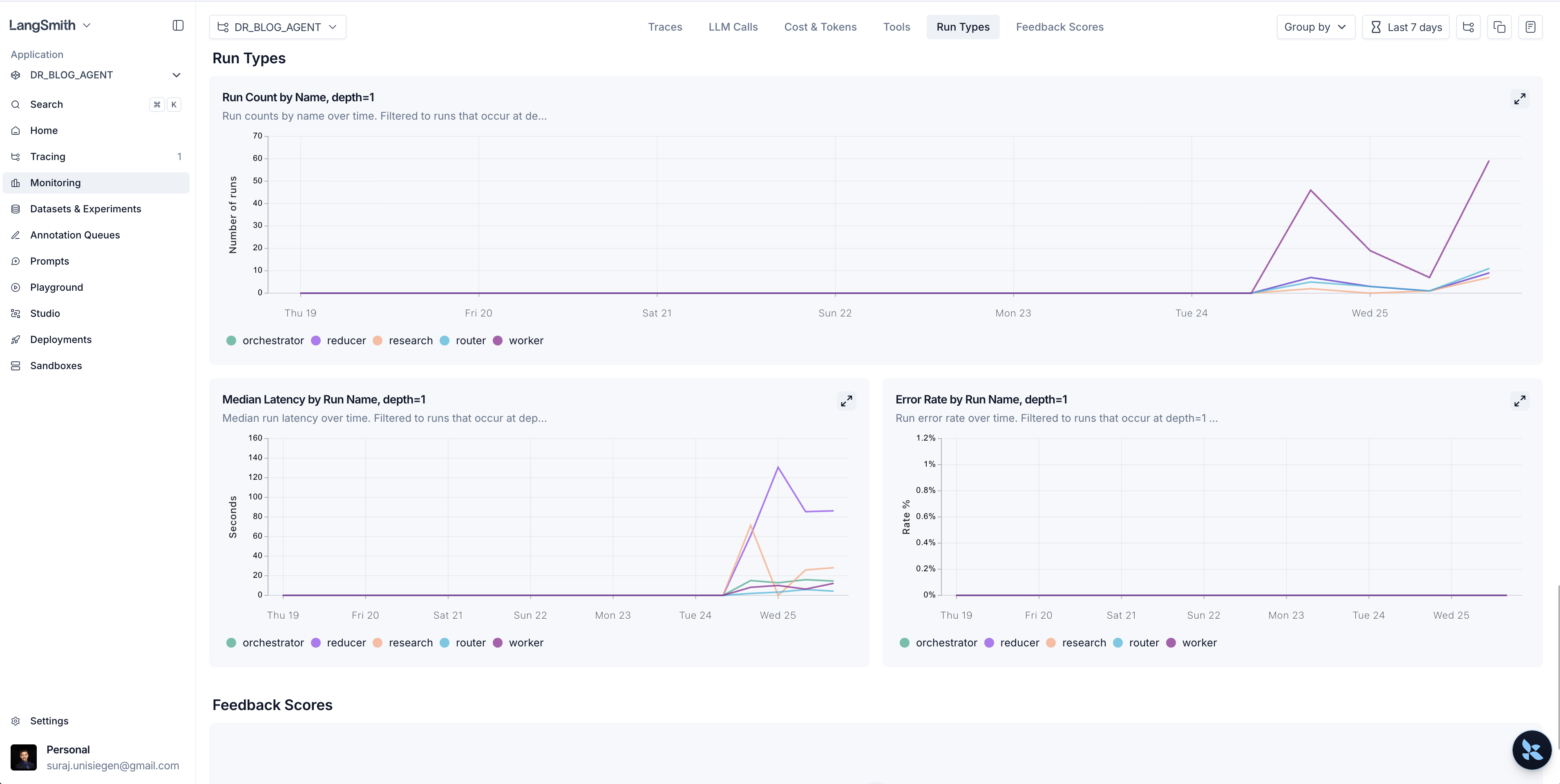

Router → Research → Orchestrator → Workers → Reducer

- Router: decides between research mode and closed-book mode

- Research Node: retrieves external knowledge (Tavily)

- Orchestrator: builds a structured plan (Pydantic schema)

- Workers: generate sections in parallel

- Reducer: combines the section outputs into the final blog
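The stage order above can be illustrated with a minimal, dependency-free sketch. It uses plain Python functions and a thread pool as stand-ins for LangGraph nodes, so it is not the project's actual graph code. `RunState`, the keyword-based router heuristic, the canned evidence, and the fixed outline are all hypothetical placeholders for what would be LLM and Tavily calls.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class RunState:
    """Shared state handed from stage to stage (hypothetical shape)."""
    topic: str
    needs_research: bool = False
    evidence: list[str] = field(default_factory=list)
    outline: list[str] = field(default_factory=list)
    sections: list[str] = field(default_factory=list)
    markdown: str = ""


def router(state: RunState) -> RunState:
    # Placeholder heuristic; a real router would ask an LLM to decide.
    keywords = ("latest", "recent", "news")
    state.needs_research = any(k in state.topic.lower() for k in keywords)
    return state


def research(state: RunState) -> RunState:
    # Stand-in for a web search call; returns canned evidence.
    state.evidence = [f"(stub source about {state.topic})"]
    return state


def orchestrator(state: RunState) -> RunState:
    # Stand-in for schema-driven planning; a fixed outline is assumed here.
    state.outline = ["Introduction", "Core Concepts", "Conclusion"]
    return state


def write_section(topic: str, title: str, evidence: list[str]) -> str:
    note = f" Sources consulted: {len(evidence)}." if evidence else ""
    return f"## {title}\n\nDraft text about {topic}.{note}"


def workers(state: RunState) -> RunState:
    # pool.map preserves input order, so sections stay in outline order.
    with ThreadPoolExecutor(max_workers=4) as pool:
        state.sections = list(
            pool.map(
                lambda title: write_section(state.topic, title, state.evidence),
                state.outline,
            )
        )
    return state


def reducer(state: RunState) -> RunState:
    state.markdown = f"# {state.topic}\n\n" + "\n\n".join(state.sections)
    return state


def run_pipeline(topic: str) -> RunState:
    state = router(RunState(topic=topic))
    if state.needs_research:  # closed-book mode skips this stage
        state = research(state)
    return reducer(workers(orchestrator(state)))
```

Calling `run_pipeline("Latest trends in RAG evaluation")` takes the research branch, while a topic without the placeholder keywords runs closed-book and leaves `evidence` empty.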

Key Features

Agentic Blog Generation

- Multi-stage structured reasoning pipeline

- Evidence-grounded content generation

- Modular section-wise drafting

- Deterministic output formats

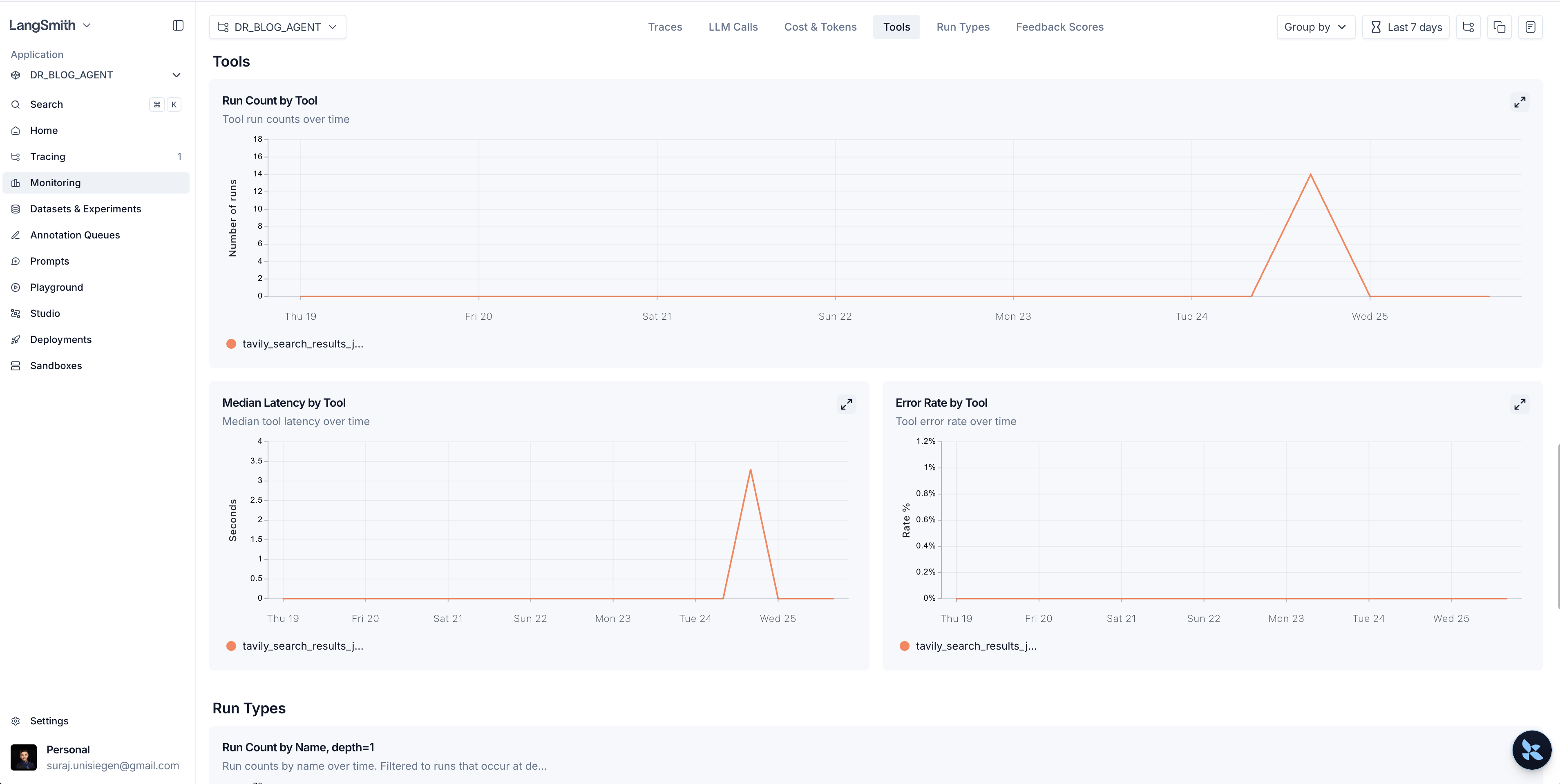

Research Integration

- Tavily-powered web search

- Citation-aware generation

- Up-to-date knowledge incorporation

UI & Observability

- Live workflow execution tracking

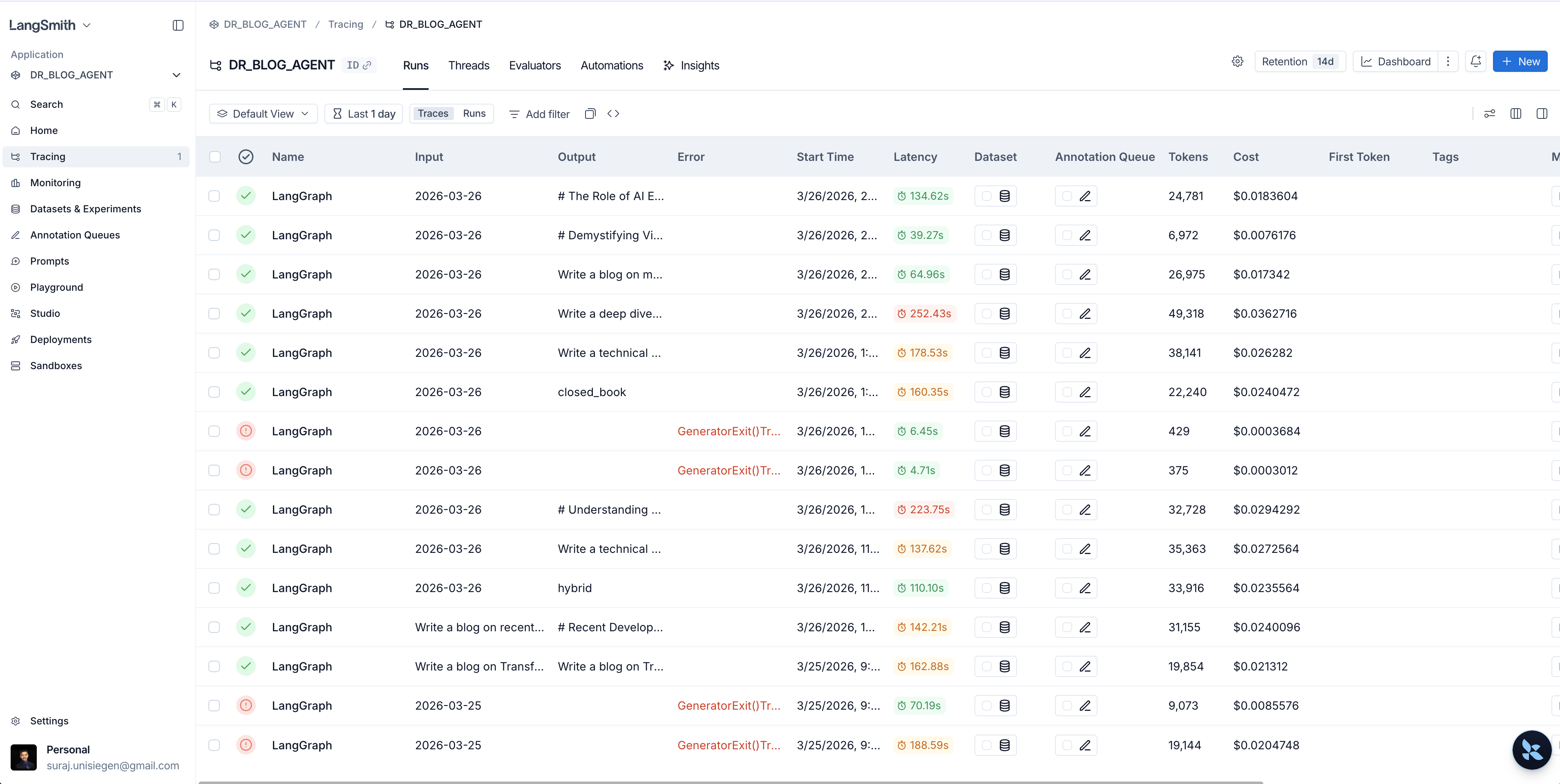

- Run history and replay

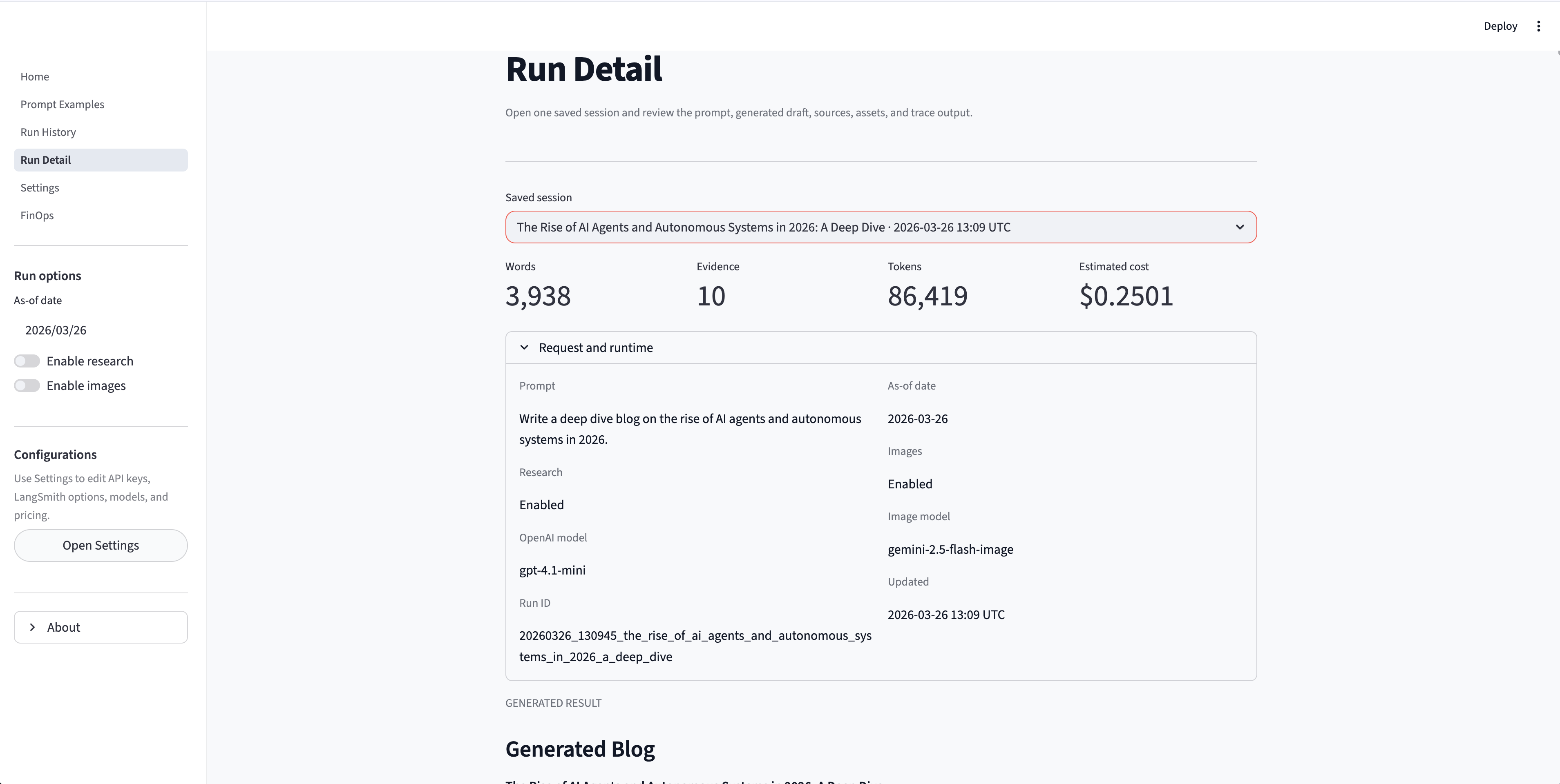

- Detailed run inspection (logs, sources, outputs)

- Transparent agent reasoning

Artifact System

Each run generates reusable outputs:

outputs/<timestamp>_<slug>/

├── blog.md

├── run.json

└── images/

- Markdown export

- Metadata tracking

- Image bundling

- Reproducibility support
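As a rough sketch of how a run directory with this layout could be written, the example below uses only the standard library. The helper names `slugify` and `save_run`, the UTC timestamp format, and the keys stored in `run.json` are assumptions for illustration; the project's real naming and metadata fields may differ.

```python
import json
import re
from datetime import datetime, timezone
from pathlib import Path


def slugify(text: str, max_len: int = 50) -> str:
    """Lowercase the text and collapse non-alphanumeric runs into hyphens."""
    slug = re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")
    return slug[:max_len].rstrip("-") or "untitled"


def save_run(base_dir: str, topic: str, markdown: str, metadata: dict) -> Path:
    """Write blog.md, run.json, and an images/ folder for one run."""
    # Timestamp format is an assumption; any sortable format would work.
    stamp = datetime.now(timezone.utc).strftime("%Y%m%d-%H%M%S")
    run_dir = Path(base_dir) / f"{stamp}_{slugify(topic)}"
    (run_dir / "images").mkdir(parents=True, exist_ok=True)

    (run_dir / "blog.md").write_text(markdown, encoding="utf-8")
    (run_dir / "run.json").write_text(
        json.dumps({"topic": topic, **metadata}, indent=2),
        encoding="utf-8",
    )
    return run_dir
```

Keeping the metadata next to the Markdown means a past run can be inspected or replayed from the directory alone.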

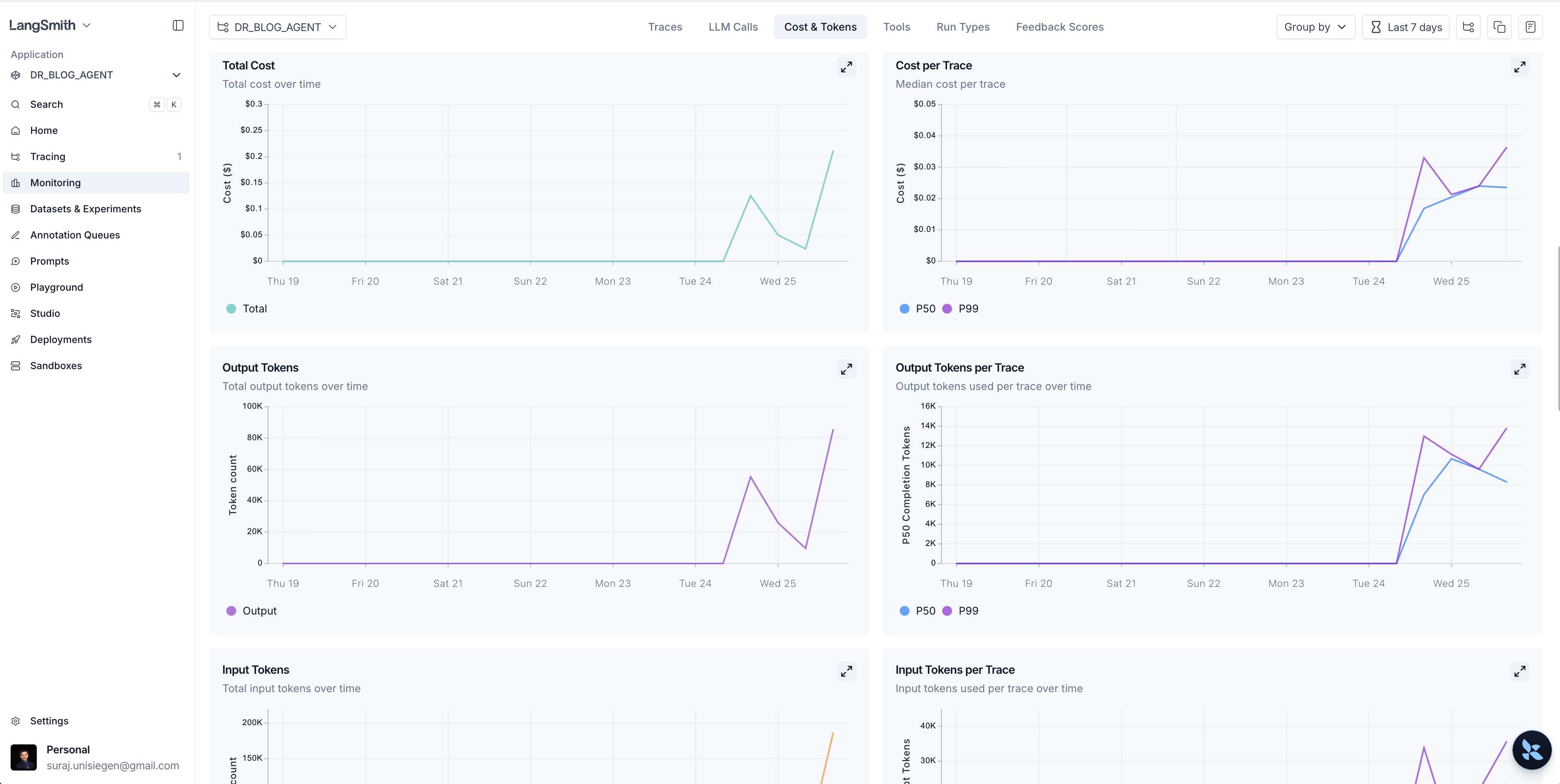

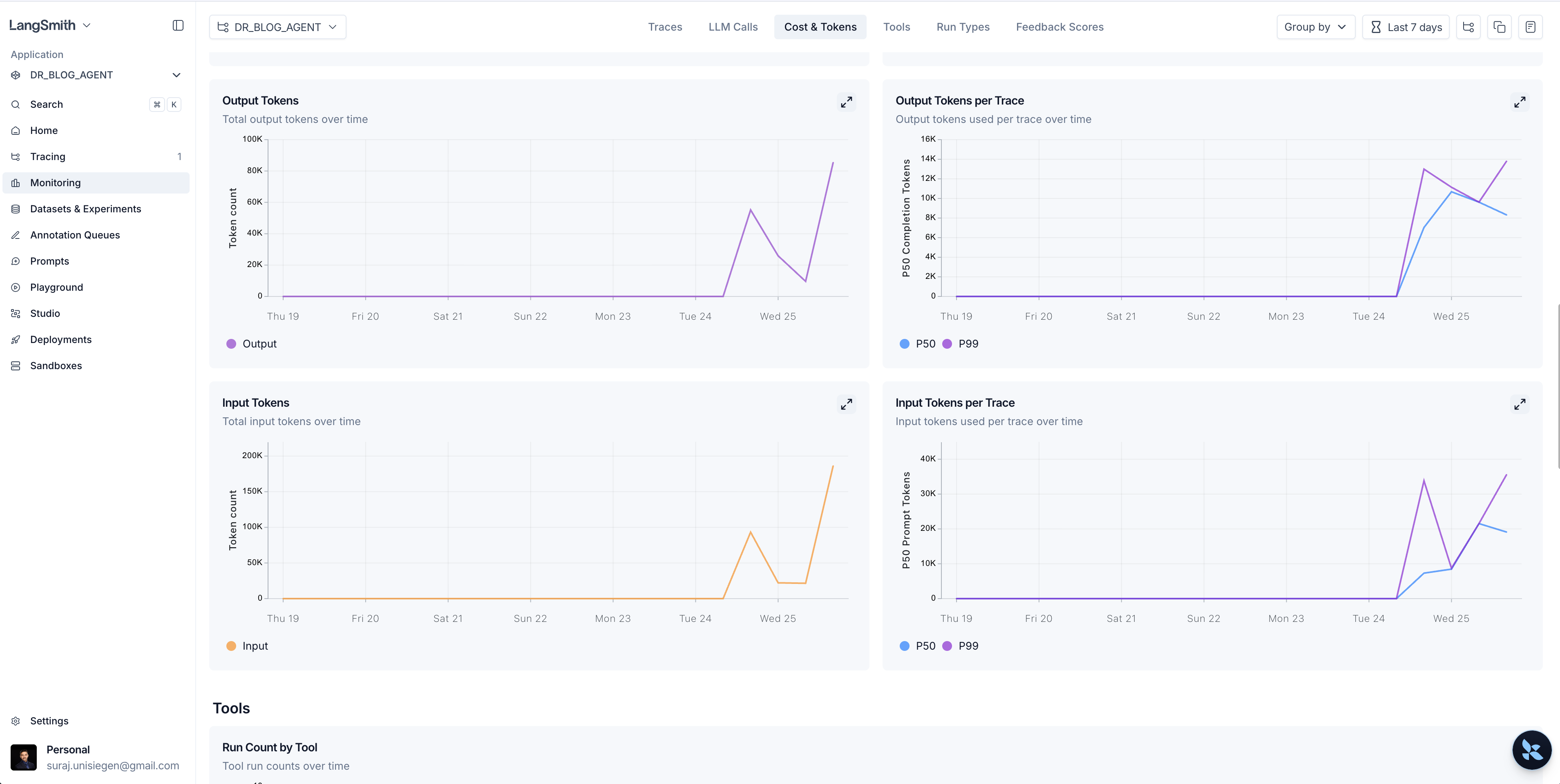

FinOps & Cost Awareness

- Token usage tracking

- Cost estimation per run

- Configurable pricing assumptions
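Per-run cost estimation of this kind usually comes down to multiplying token counts by configurable rates. The sketch below shows one way to do that; the `Pricing` and `Usage` classes are hypothetical, and the rates in the usage note are placeholders, not real provider prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per one million tokens (placeholder values, set by the user)."""
    input_per_million: float
    output_per_million: float


@dataclass
class Usage:
    """Accumulated token counts for one run."""
    input_tokens: int = 0
    output_tokens: int = 0

    def add(self, input_tokens: int, output_tokens: int) -> None:
        # Called once per LLM request to accumulate totals.
        self.input_tokens += input_tokens
        self.output_tokens += output_tokens


def estimate_cost(usage: Usage, pricing: Pricing) -> float:
    """Return the estimated cost in USD for the accumulated usage."""
    total = (
        usage.input_tokens * pricing.input_per_million
        + usage.output_tokens * pricing.output_per_million
    )
    return total / 1_000_000
```

For example, 3,000 input tokens and 2,000 output tokens at placeholder rates of 1.00 and 2.00 USD per million tokens give an estimate of 0.007 USD.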

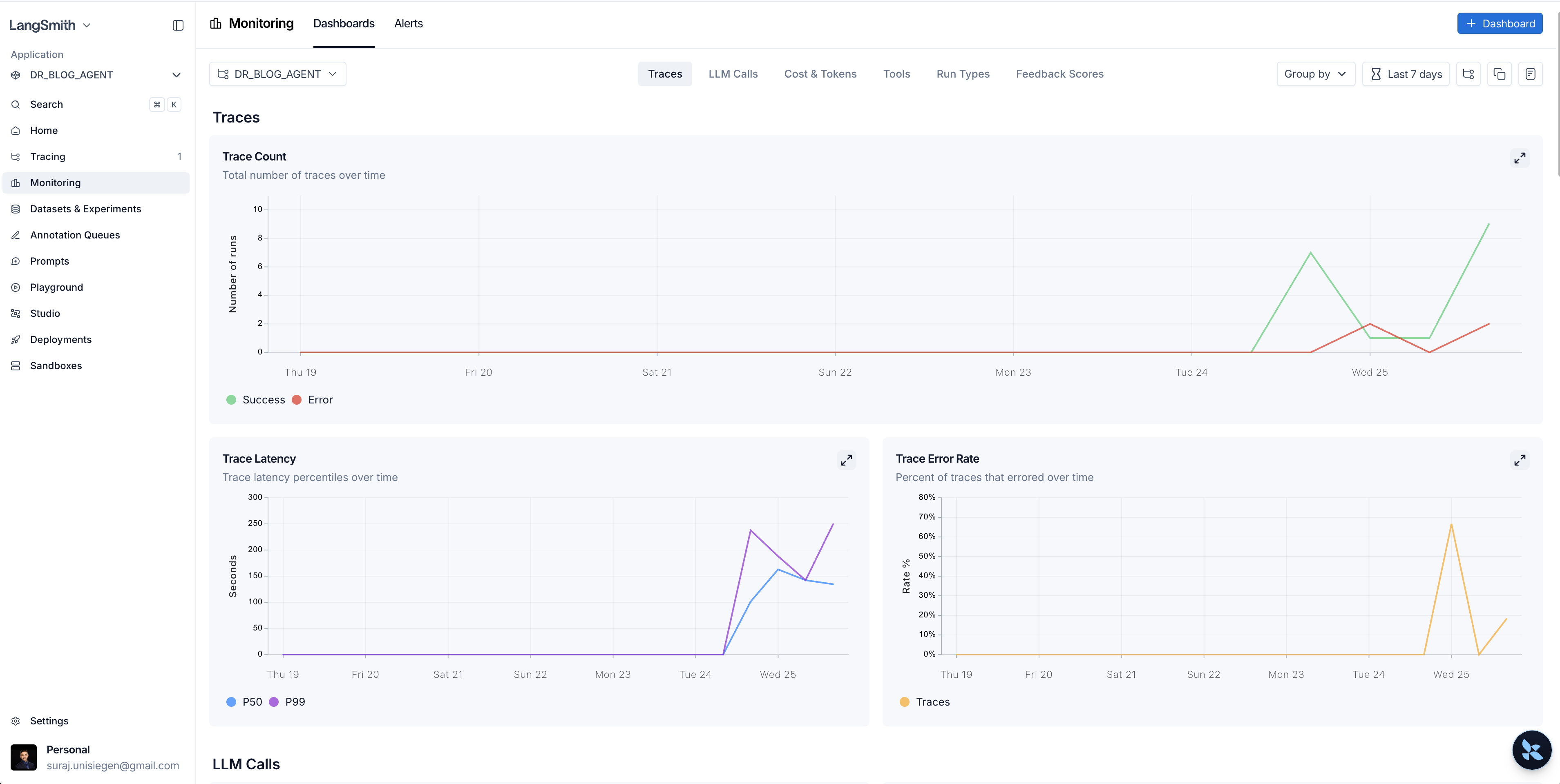

Observability & Monitoring

Streamlit Interface

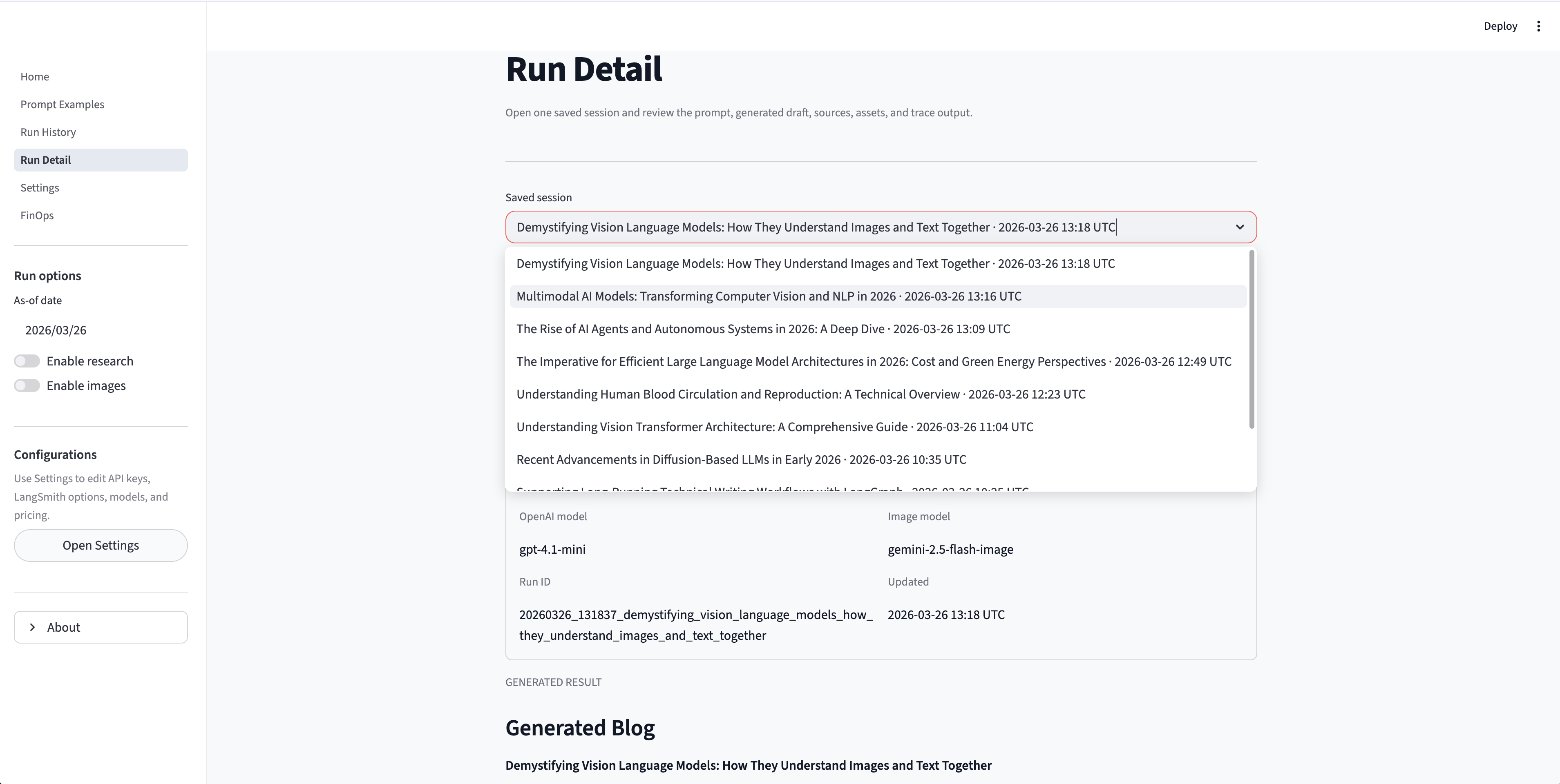

The UI is structured into multiple functional pages:

- Home → Prompt input + live workflow

- Prompt Examples → reusable prompts

- Run History → previous sessions

- Run Detail → full trace + outputs

- Settings → API keys + model config

- FinOps → cost tracking

Tech Stack

- Backend / Agents: LangGraph, LangChain

- LLMs: OpenAI (text), Google GenAI (images)

- Research: Tavily API

- Frontend: Streamlit

- Data Models: Pydantic

- Environment: Python 3.13, uv

CLI Usage

Supports both UI and CLI workflows:

deep-blog-agent "Write a technical blog on RAG evaluation in production"

Optional flags:

- --no-research

- --no-images

- --print-markdown
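A command-line interface with these flags could be declared with `argparse` roughly as follows. This is a sketch, not the project's actual entry point: the help texts describe what the flag names suggest, and `build_parser` and `parse_args` are hypothetical helper names.

```python
import argparse


def build_parser() -> argparse.ArgumentParser:
    parser = argparse.ArgumentParser(
        prog="deep-blog-agent",
        description="Generate a technical blog post from a topic or prompt.",
    )
    parser.add_argument("topic", help="topic or prompt for the blog post")
    # Flag behaviour below is assumed from the flag names.
    parser.add_argument(
        "--no-research",
        action="store_true",
        help="skip web research and run in closed-book mode",
    )
    parser.add_argument(
        "--no-images",
        action="store_true",
        help="skip image generation",
    )
    parser.add_argument(
        "--print-markdown",
        action="store_true",
        help="print the final Markdown to stdout",
    )
    return parser


def parse_args(argv=None) -> argparse.Namespace:
    """Parse argv (defaults to sys.argv[1:] when argv is None)."""
    return build_parser().parse_args(argv)
```

`argparse` exposes hyphenated flags as underscore attributes, so `--no-images` becomes `args.no_images`.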

Implementation Highlights

- LangGraph-based modular orchestration

- Parallel section generation

- Schema-driven planning with Pydantic

- Separation of concerns (providers, core, agents, UI)

- Reproducible artifact pipeline

- Session-based API key handling in UI
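To illustrate schema-driven planning, the sketch below validates a JSON plan (as an LLM might return it) before any section is drafted. The project uses Pydantic for this; the example substitutes standard-library dataclasses to stay dependency-free. The `SectionPlan` and `BlogPlan` fields are hypothetical and do not reflect the real schema.

```python
import json
from dataclasses import dataclass


@dataclass
class SectionPlan:
    title: str
    goal: str
    target_words: int = 300

    def __post_init__(self) -> None:
        if not self.title.strip():
            raise ValueError("section title must not be empty")
        if self.target_words <= 0:
            raise ValueError("target_words must be positive")


@dataclass
class BlogPlan:
    title: str
    sections: list[SectionPlan]

    def __post_init__(self) -> None:
        if not self.sections:
            raise ValueError("a plan needs at least one section")


def parse_plan(raw_json: str) -> BlogPlan:
    """Parse and validate a plan; malformed plans fail before drafting starts."""
    data = json.loads(raw_json)
    # Unknown or missing section keys raise TypeError from the dataclass constructor.
    sections = [SectionPlan(**item) for item in data.get("sections", [])]
    return BlogPlan(title=data["title"], sections=sections)
```

Rejecting a bad plan at this point means the parallel workers only ever receive well-formed section specifications.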

What I Learned

- Designing agentic workflows with structured reasoning

- Building observable AI systems (LangSmith + logs + UI tracing)

- Integrating retrieval + generation + planning pipelines

- Managing cost-aware GenAI systems (FinOps layer)

- Creating production-ready ML/LLM applications with UI + CLI